Install Spark on Windows and run Scala programs full

For a full list of options, run Spark shell with the -help option. Locally with one thread, or local to run locally with N threads.

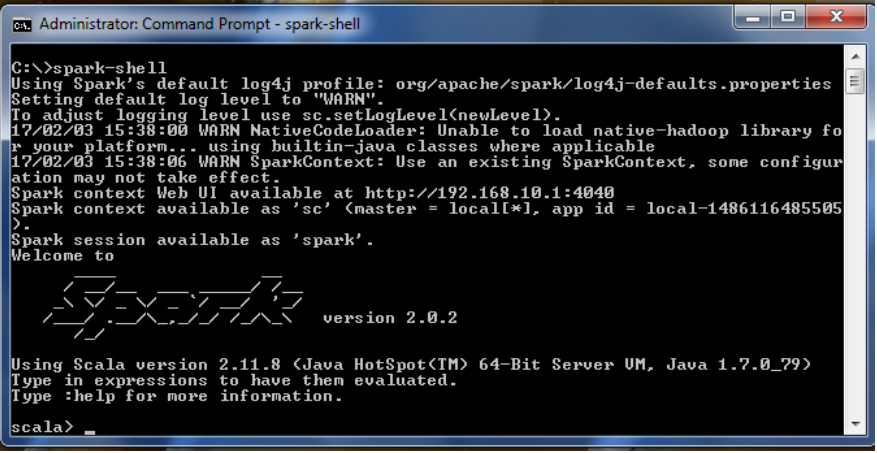

Master URL for a distributed cluster, or local to run Great way to learn the framework./bin/spark-shell -master local You can also run Spark interactively through a modified version of the Scala shell. To run one of the Java or Scala sample programs, useīin/run-example in the top-level Spark directory. Scala, Java, Python and R examples are in theĮxamples/src/main directory. Spark comes with several sample programs. This prevents KubernetesClientException when kubernetes-client library uses okhttp library internally. Support for Scala 2.11 is deprecated as of Spark 2.4.1įor Java 8u251+, HTTP2_DISABLE=true and 2_DISABLE=true are required additionally for fabric8 kubernetes-client library to talk to Kubernetes clusters. Support for Scala 2.10 was removed as of 2.3.0. Note that support for Java 7, Python 2.6 and old Hadoop versions before 2.6.5 were removed as of Spark 2.2.0. You will need to use a compatible Scala version Spark runs on Java 8, Python 2.7+/3.4+ and R 3.5+.

Or the JAVA_HOME environment variable pointing to a Java installation. Locally on one machine - all you need is to have java installed on your system PATH, Spark runs on both Windows and UNIX-like systems (e.g.
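The local master setting used with the shell can also be set from a standalone Scala program. The following is a minimal sketch, assuming Spark 2.4.x (the `spark-sql` module) is on the classpath; the object name `LocalWordCount`, the helper `countWords`, and the sample sentences are hypothetical and only serve as illustration.

```scala
import org.apache.spark.sql.SparkSession

// Hypothetical example object; not part of the Spark distribution.
object LocalWordCount {

  // Counts how often each whitespace-separated word occurs in the given lines.
  def countWords(spark: SparkSession, lines: Seq[String]): Map[String, Int] =
    spark.sparkContext
      .parallelize(lines)           // distribute the lines as an RDD
      .flatMap(_.split("\\s+"))     // split each line into words
      .filter(_.nonEmpty)           // drop empty tokens
      .map(word => (word, 1))       // pair each word with a count of 1
      .reduceByKey(_ + _)           // sum the counts per word
      .collect()                    // bring the results back to the driver
      .toMap

  def main(args: Array[String]): Unit = {
    // "local[2]" runs Spark locally with two threads.
    val spark = SparkSession.builder()
      .appName("LocalWordCount")
      .master("local[2]")
      .getOrCreate()

    try {
      // Sample input chosen for illustration only.
      val counts = countWords(spark, Seq("spark runs locally", "spark runs on windows"))
      counts.toSeq.sortBy(_._1).foreach { case (word, n) => println(s"$word\t$n") }
    } finally {
      spark.stop() // always release the local Spark resources
    }
  }
}
```

Inside `spark-shell` a session named `spark` is already available, so after pasting the object `LocalWordCount.countWords(spark, Seq("a b a"))` can be called directly without building a new session.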

Install Spark on Windows and run Scala programs install

Scala and Java users can include Spark in their projects using its Maven coordinates and in the future Python users can also install Spark from PyPI.
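As a rough illustration of the Maven coordinates route, an sbt build definition might look like the sketch below. The project name, the choice of the `spark-sql` module, and the exact Scala patch version are assumptions made for this example; the group ID `org.apache.spark` and the version 2.4.8 match the Spark release this documentation describes.

```scala
// build.sbt: a minimal sketch, not an official template.
// The project name is hypothetical.
name := "spark-local-example"

// Assumed Scala 2.12.x patch release; any compatible 2.12.x should work similarly.
scalaVersion := "2.12.10"

// %% appends the Scala binary version, resolving to spark-sql_2.12.
libraryDependencies += "org.apache.spark" %% "spark-sql" % "2.4.8"
```

Maven users would declare the same group ID, the artifact ID with the Scala suffix (for example `spark-sql_2.12`), and the same version in their `pom.xml`.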

Install Spark on Windows and run Scala programs download

Apache Spark is a fast and general-purpose cluster computing system. It provides high-level APIs in Java, Scala, Python and R, and an optimized engine that supports general execution graphs. It also supports a rich set of higher-level tools including Spark SQL for SQL and structured data processing, MLlib for machine learning, GraphX for graph processing, and Spark Streaming.

Security in Spark is off by default. This could mean you are vulnerable to attack by default. Please see Spark Security before downloading and running Spark.

Get Spark from the downloads page of the project website. This documentation is for Spark version 2.4.8. Spark uses Hadoop’s client libraries for HDFS and YARN. Downloads are pre-packaged for a handful of popular Hadoop versions. Users can also download a “Hadoop free” binary and run Spark with any Hadoop version.